Real-time voice agents with Stream Vision Agents and Amazon Nova 2 Sonic

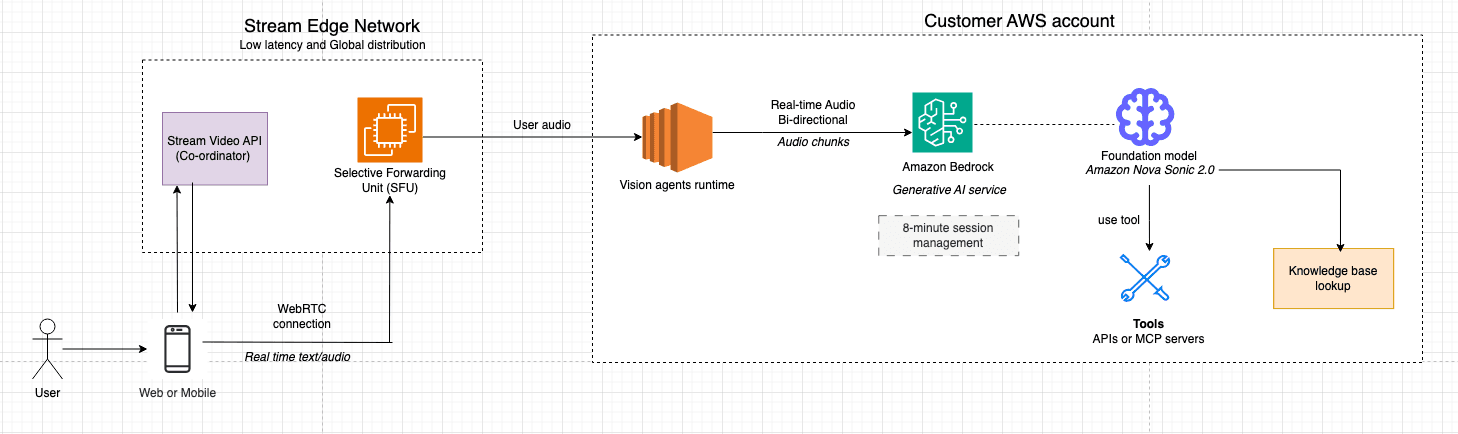

This post was co-authored with Neevash Ramdial, Technical Marketing leader at Stream.

Building production-grade voice agents that feel natural and responsive is a complex engineering challenge. You must orchestrate speech-to-speech models, manage low-latency audio streaming, and handle connection lifecycle. You also need to deliver consistent experiences across web, mobile, and desktop applications. In this post, you learn how to combine Stream’s Vision Agents open-source framework with Amazon Bedrock and Amazon Nova 2 Sonic to build real-time voice agents that can be production-ready in minutes. You’ll learn how the integration works under the hood, walk through code examples, and explore advanced capabilities like function calling, automatic reconnection, and multilingual voice support.

The challenge

Building voice-enabled AI applications requires orchestrating multiple complex systems that must work together reliably. You face the challenge of managing real-time audio streaming infrastructure while simultaneously integrating speech recognition, language models, and text-to-speech services. Each of these has its own latency characteristics and failure modes.

A typical voice interaction involves capturing audio from the user’s microphone, streaming it to a speech-to-text service, processing the transcript through a language model, generating a response, converting that response back to speech, and delivering it to the user. All of this must happen within a window of a few hundred milliseconds to feel natural. Delays in this pipeline can break the conversational flow and frustrate users.

Beyond the core AI pipeline, production voice applications must handle the messy realities of real-world deployment: unreliable network connections, browser compatibility issues, session timeouts, and graceful degradation when services become unavailable.
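To make the reconnection burden concrete, here is a minimal sketch of the kind of retry logic teams typically write by hand: exponential backoff with jitter around a connection attempt. It is a generic illustration, not how Vision Agents or Amazon Nova 2 Sonic implement reconnection. The names `backoff_delays` and `connect_with_retry`, the `connect` callable, and the default timing values are all assumptions made for this example.

```python
import random
import time


def backoff_delays(count, base=0.5, cap=8.0, rng=None):
    """Yield `count` wait times in seconds using exponential backoff with full jitter.

    `base` and `cap` are illustrative defaults, not values from any real service.
    """
    rng = rng or random.Random()
    for attempt in range(count):
        # The ceiling doubles on every attempt but never exceeds the cap.
        ceiling = min(cap, base * (2 ** attempt))
        # Full jitter: pick a random wait between 0 and the ceiling.
        yield rng.uniform(0.0, ceiling)


def connect_with_retry(connect, max_attempts=5, sleep=time.sleep, rng=None):
    """Call the hypothetical `connect` callable until it succeeds or attempts run out."""
    delays = list(backoff_delays(max_attempts - 1, rng=rng))
    for attempt in range(max_attempts):
        try:
            return connect()
        except ConnectionError as exc:
            if attempt == max_attempts - 1:
                raise ConnectionError(
                    f"gave up after {max_attempts} attempts"
                ) from exc
            # Wait before the next attempt; no sleep follows the final failure.
            sleep(delays[attempt])
```

Passing `sleep` and `rng` as parameters keeps the helper easy to test without real waiting. Production code would also need to restore session state after reconnecting, which this sketch leaves out.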
You often spend more time building reconnection logic, managing WebRTC connections, and handling edge cases than on the actual AI capabilities. This infrastructure burden means teams either invest months building custom solutions or settle for limited off-the-shelf products that don’t meet their specific needs. Vision Agents abstracts the infrastructure complexity while providing the flexibility to customize the AI experience.

Solution overview

The solution brings together three key components:

- Amazon Nova 2 Sonic: a speech-to-speech foundation model available through Amazon Bedrock that provides real-time bidirectional audio streaming, native turn detection, and function calling capabilities. Nova 2 Sonic handles the full speech-to-speech pipeline, accepting audio input and producing audio output. This avoids the need for separate STT and TTS services.
- Stream’s Vision Agents: an open-source Python framework for building real-time voice and video AI agents. It provides a plugin-based architecture with 25+ integrations, production deployment tooling, and client SDKs for React, iOS, Android, Flutter, and React Native. The system is designed with flexibility at its core. You can use Stream’s global edge network for efficient performance or integrate your preferred real-time communication (RTC) provider. Vision Agents handles provider-specific specifications through a clean decorator-based interface, enabling use cases like customer support agents, workflow automation, and API-driven actions with minimal boilerplate code. With Vision Agents, you can build AI applications using an open-source framework, third-party model providers, and telephony services.
- Stream’s Edge Network…