Run custom MCP proxies serverless on Amazon Bedrock AgentCore Runtime

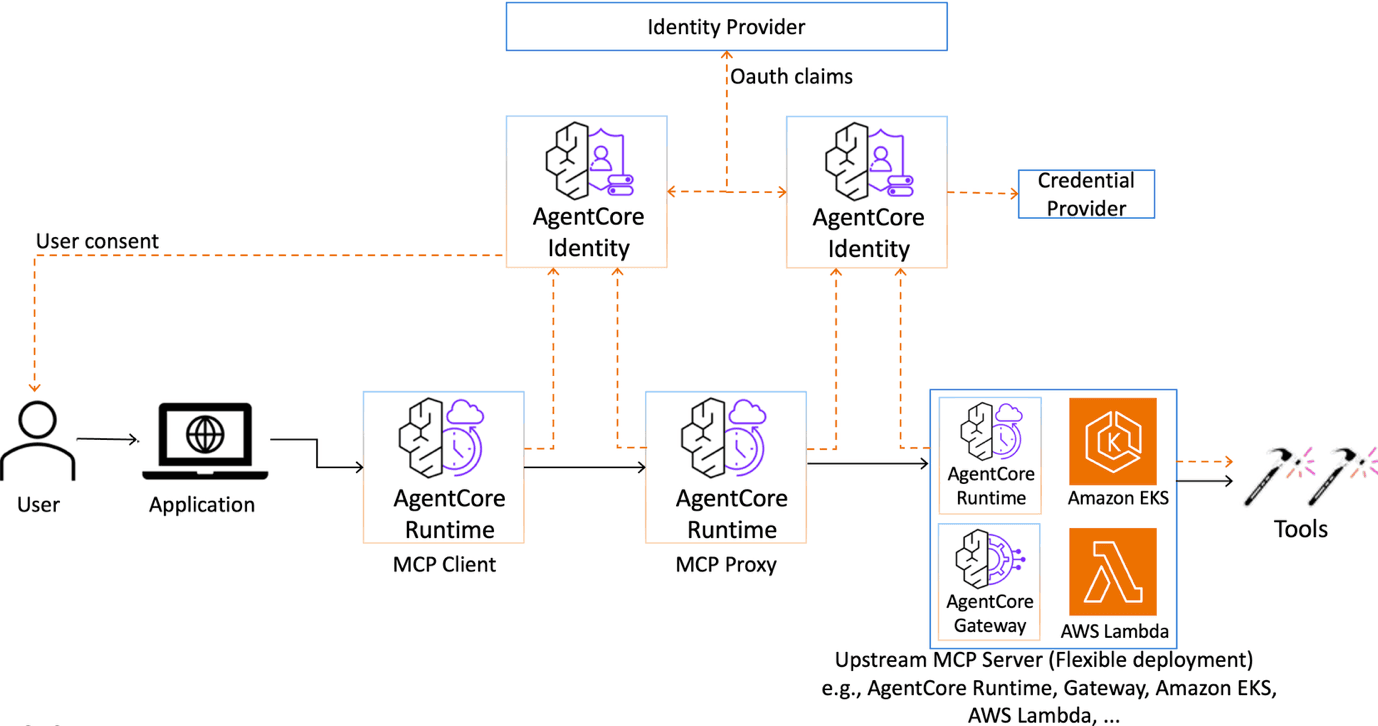

When AI agents connect to tools through the Model Context Protocol (MCP), they gain access to capabilities that range from database queries and API calls to file operations and third-party service integrations. In production, these interactions need proper governance, controls, and observability aligned with an organization’s security policies. This includes sanitizing tool inputs before they reach backend systems, generating audit trails in specific formats, or redacting sensitive data at the protocol layer. These requirements are shaped by internal governance standards, industry regulations, and the specifics of each production environment. This post shows you how to deploy a serverless MCP proxy on Amazon Bedrock AgentCore Runtime that gives you a programmable layer to implement these controls.

Amazon Bedrock AgentCore Gateway provides centralized governance and control for agent-tool integration, including semantic tool discovery, managed credentials, and policy enforcement. For organizations that need to embed custom logic in the Gateway request path, Gateway supports Lambda interceptors. These interceptors let you run validation, transformation, or filtering code as AWS Lambda functions on every tool invocation. This allows you to keep your custom logic self-contained and managed alongside your Gateway configuration.

However, some organizations have invested in custom MCP filtering logic that is tightly coupled with internal libraries or on-premises compliance systems. They want to reuse that logic on AgentCore Runtime without refactoring it into Lambda functions. Others operate across multiple systems or hybrid environments where running controls as a standalone MCP server offers more portability than a system-specific interceptor. In these cases, a serverless MCP proxy running on AgentCore Runtime can provide a complementary pattern.
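To make the controls mentioned above more concrete (input sanitization, redaction, and audit records), the following is a minimal sketch of what such logic could look like when applied to a single MCP `tools/call` message. It is not taken from the implementation this post describes. The function names, the email pattern, and the audit record fields are all assumptions chosen for illustration, and a real policy would come from your own governance rules.

```python
import re
from datetime import datetime, timezone

# Hypothetical patterns for this sketch; real policies would be defined by your organization.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
CONTROL_CHARS_RE = re.compile(r"[\x00-\x08\x0b\x0c\x0e-\x1f]")


def sanitize(value):
    """Recursively strip control characters and redact email addresses in string values."""
    if isinstance(value, str):
        value = CONTROL_CHARS_RE.sub("", value)
        return EMAIL_RE.sub("[REDACTED_EMAIL]", value)
    if isinstance(value, dict):
        return {key: sanitize(item) for key, item in value.items()}
    if isinstance(value, list):
        return [sanitize(item) for item in value]
    return value  # numbers, booleans, and None pass through unchanged


def apply_controls(request: dict) -> tuple[dict, dict]:
    """Return (sanitized request, audit record) for a JSON-RPC `tools/call` message.

    Other methods are returned untouched with an empty audit record.
    """
    if request.get("method") != "tools/call":
        return request, {}
    params = request.get("params", {})
    original_args = params.get("arguments", {})
    clean_args = sanitize(original_args)
    # Build a new message instead of mutating the caller's request.
    cleaned = {**request, "params": {**params, "arguments": clean_args}}
    audit = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "tool": params.get("name"),
        "request_id": request.get("id"),
        "arguments_modified": clean_args != original_args,
    }
    return cleaned, audit


if __name__ == "__main__":
    example = {
        "jsonrpc": "2.0",
        "id": 1,
        "method": "tools/call",
        "params": {"name": "lookup_customer", "arguments": {"query": "alice@example.com"}},
    }
    cleaned_request, audit_record = apply_controls(example)
    print(cleaned_request)
    print(audit_record)
```

Keeping the controls in a pure function like this makes them straightforward to unit test and to reuse regardless of where the logic is hosted.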
AgentCore Runtime is a fully managed compute environment for deploying AI agents and MCP servers. It provides serverless infrastructure with automatic scaling, built-in observability through Amazon CloudWatch and OpenTelemetry, and AgentCore Identity for authentication and authorization. Because Runtime natively supports MCP, you can use it to host MCP servers, including MCP proxies that add custom controls to MCP traffic.

We show you how to build and deploy a stateless MCP proxy on AgentCore Runtime. The proxy runs as a serverless workload on Runtime, discovers tools from an upstream MCP server at startup, re-exposes them with your custom logic applied, and forwards requests transparently. The upstream MCP server can be any MCP-compatible endpoint of your choice, including MCP servers running on AgentCore Runtime, self-hosted MCP servers, or third-party MCP services. You can also connect this proxy to Amazon Bedrock AgentCore Gateway. This lets you take advantage of Gateway’s managed tool discovery, credential management, and policy enforcement across MCP servers, Lambda functions, and SaaS integrations.

Using an open source GitHub implementation as a foundation, we walk you through the architecture, explain how authorization works at each layer, deploy the proxy with an automated script, and test the end-to-end flow with a sample agent. By the end, you have a working deployment pattern for adding custom controls to MCP traffic…
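The proxy flow described above (discover upstream tools at startup, re-expose them with custom logic, forward calls) can be sketched in a few lines. This is an illustrative model only, not the open source implementation the post refers to. `McpProxySketch`, `fake_upstream`, the tool names, and the `blocked_tools` filter are hypothetical, and the upstream server is replaced by an in-memory function so the example runs without a network connection or an MCP SDK.

```python
from typing import Any, Callable

JsonDict = dict[str, Any]
# Assumption: a transport is any callable that sends one JSON-RPC request upstream
# and returns the response. A real proxy would use an HTTP-based MCP client here.
Transport = Callable[[JsonDict], JsonDict]


class McpProxySketch:
    """Illustrative proxy: discover upstream tools once, re-expose them, forward calls."""

    def __init__(self, upstream: Transport, blocked_tools: frozenset[str] = frozenset()):
        self._upstream = upstream
        # Discovery at startup: ask the upstream server for its tool list once.
        listing = upstream({"jsonrpc": "2.0", "id": 0, "method": "tools/list"})
        # Custom logic (hypothetical): hide tools that policy does not allow.
        self._tools = {
            tool["name"]: tool
            for tool in listing["result"]["tools"]
            if tool["name"] not in blocked_tools
        }

    def handle(self, request: JsonDict) -> JsonDict:
        method = request.get("method")
        if method == "tools/list":
            # Re-expose only the tools that passed the filter.
            result = {"tools": list(self._tools.values())}
            return {"jsonrpc": "2.0", "id": request.get("id"), "result": result}
        if method == "tools/call":
            name = request.get("params", {}).get("name")
            if name not in self._tools:
                error = {"code": -32602, "message": f"Unknown tool: {name}"}
                return {"jsonrpc": "2.0", "id": request.get("id"), "error": error}
            # Validation, redaction, or audit logging would run here before forwarding.
        # Everything else is forwarded to the upstream server unchanged.
        return self._upstream(request)


def fake_upstream(request: JsonDict) -> JsonDict:
    """In-memory stand-in for an upstream MCP server (hypothetical tools)."""
    if request["method"] == "tools/list":
        tools = [
            {"name": "get_weather", "description": "Look up weather", "inputSchema": {"type": "object"}},
            {"name": "delete_records", "description": "Delete rows", "inputSchema": {"type": "object"}},
        ]
        return {"jsonrpc": "2.0", "id": request["id"], "result": {"tools": tools}}
    if request["method"] == "tools/call":
        text = f"{request['params']['name']} ok"
        result = {"content": [{"type": "text", "text": text}], "isError": False}
        return {"jsonrpc": "2.0", "id": request["id"], "result": result}
    error = {"code": -32601, "message": "Method not found"}
    return {"jsonrpc": "2.0", "id": request.get("id"), "error": error}


if __name__ == "__main__":
    proxy = McpProxySketch(fake_upstream, blocked_tools=frozenset({"delete_records"}))
    print(proxy.handle({"jsonrpc": "2.0", "id": 1, "method": "tools/list"}))
```

The sketch holds only the tool catalog fetched at startup and no per-session data, which is one way to read "stateless" here: any instance can serve any request, which suits a serverless runtime that scales instances up and down.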