Build Strands Agents with SageMaker AI models and MLflow

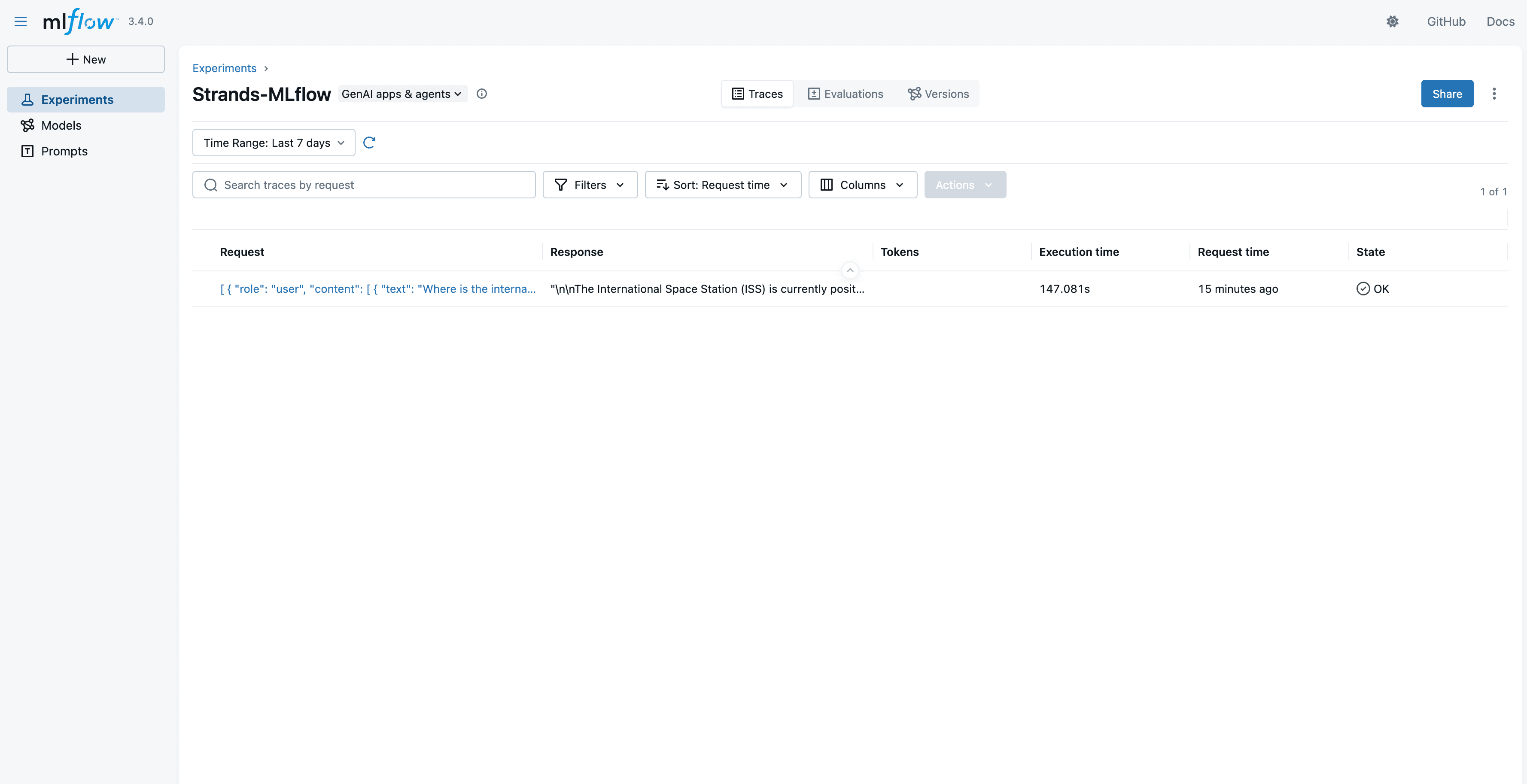

Enterprises building AI agents often require more than what managed foundation model (FM) services can provide. They need precise control over performance tuning, cost optimization at scale, compliance and data residency, model selection, and networking configurations that integrate with existing security architectures. Amazon SageMaker AI endpoints align with these requirements by giving organizations control over compute resources, scaling behavior, and infrastructure placement, while benefiting from the managed operational layer of AWS. Models deployed on SageMaker AI can power AI agents, handle conversational workloads, and integrate with orchestration frameworks, just like the FMs available on Amazon Bedrock. The difference is that the organization retains architectural control over how and where inference happens.

In this post, we demonstrate how to build AI agents using the Strands Agents SDK with models deployed on SageMaker AI endpoints. You will learn how to deploy foundation models from SageMaker JumpStart, integrate them with Strands Agents, and establish production-grade observability using SageMaker Serverless MLflow for agent tracing. We also cover how to implement A/B testing across multiple model variants and evaluate agent performance using MLflow metrics, showing how you can build, deploy, and continuously improve AI agents on infrastructure you control.

Strands Agents SDK is an open source SDK that takes a model-driven approach to building and running AI agents in only a few lines of code. Strands scales from simple to complex agent use cases, and from local development to deployment in production.

Amazon SageMaker JumpStart is a machine learning (ML) hub that can help you accelerate your ML journey.
With SageMaker JumpStart, you can quickly evaluate, compare, and select FMs based on predefined quality and responsibility metrics to perform tasks such as article summarization and image generation.

SageMaker AI MLflow is a managed capability that streamlines the machine learning lifecycle through experiment tracking, model versioning, and deployment management.

In this post, we:

- Deploy models on SageMaker AI – Deploy foundation models from SageMaker JumpStart.
- Integrate Strands with SageMaker AI – Use deployed SageMaker AI models with Strands Agents.
- Set up agent observability – Configure the SageMaker AI MLflow App for agent tracing.
- Implement A/B testing with evaluation – Deploy multiple model variants and evaluate agents with MLflow metrics.

A Jupyter notebook with the complete code for this post can be found in the GitHub repo.

Building your first Strands Agent

A Strands agent combines a model, a system prompt, and a set of tools. Strands supports many model providers, including Amazon SageMaker AI. It also provides many commonly used tools through the strands-agents-tools package, so organizations can quickly build AI agents for their business needs.

The following code snippet shows how to create your first agent using the Strands Agents SDK. A detailed sample of agents built using Strands Agents SDK can be found in the GitHub repo. This agent uses the Claude Sonnet 4.5 multi-Region inference model on Amazon Bedrock.…
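
To make the model, system prompt, and tools combination concrete, here is a minimal sketch of how such an agent might be assembled. It is an illustration under stated assumptions and not the post's original snippet: the `MODEL_ID` value, the system prompt text, the choice of the `calculator` tool, and the `build_agent` helper name are all hypothetical. Check the inference profile ID available in your own account and Region.

```python
"""Minimal sketch of a first Strands agent (illustrative assumptions throughout)."""

# Assumed cross-Region inference profile ID for Claude Sonnet 4.5 on Amazon
# Bedrock; verify the exact ID for your account and Region before use.
MODEL_ID = "us.anthropic.claude-sonnet-4-5-20250929-v1:0"

# Hypothetical system prompt chosen only for this example.
SYSTEM_PROMPT = "You are a helpful assistant. Use the calculator tool for arithmetic."


def build_agent(model_id: str = MODEL_ID, system_prompt: str = SYSTEM_PROMPT):
    """Combine a model, a system prompt, and a tool list into a Strands agent."""
    # Imported lazily so this module loads even when the SDK is not installed.
    # Requires: pip install strands-agents strands-agents-tools
    from strands import Agent
    from strands.models import BedrockModel
    from strands_tools import calculator

    # The model provider wraps the Bedrock-hosted FM.
    model = BedrockModel(model_id=model_id)

    # The agent ties the model to its instructions and available tools.
    return Agent(model=model, system_prompt=system_prompt, tools=[calculator])
```

With AWS credentials configured and Bedrock model access granted, calling `agent = build_agent()` followed by `agent("What is 1234 * 5678?")` would send the prompt to the model and let it invoke the calculator tool as needed. Swapping in a different model provider should only require changing the object passed as `model`.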