Migrating a text agent to a voice assistant with Amazon Nova 2 Sonic

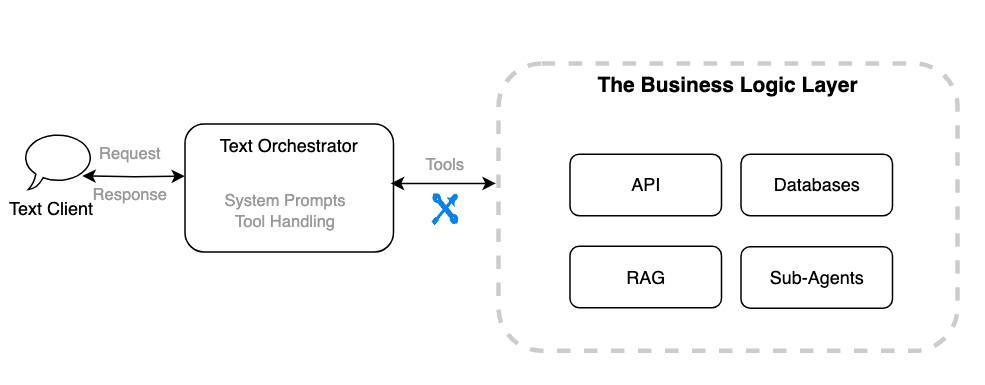

Migrating a text agent to a voice assistant is increasingly important because users expect faster, more natural interactions. Instead of typing, customers want to speak and be understood in real time. Industries such as finance, healthcare, education, social media, and retail are exploring Amazon Nova 2 Sonic to enable natural, real-time speech interactions at scale.

In this post, we explore what it takes to migrate a traditional text agent into a conversational voice assistant using Amazon Nova 2 Sonic. We compare text and voice agent requirements, highlight design priorities for different use cases, break down agent architecture, and address common concerns such as reusing existing tools and sub-agents and adapting system prompts. This post helps you navigate the migration process and avoid common pitfalls. You can also find a Skill in the Nova sample repo that works with AI IDEs such as Kiro and Claude Code to automatically convert your text agent into a voice agent.

Text agents and voice agents aren’t the same problem

Migrating from a text agent to a voice assistant might look like adding a voice interface on top of unchanged business logic, but the two differ in ways that affect both design and implementation. The following sections break down the key differences and how each one shapes the agent.

Response design

A text agent is built to deliver paragraphs that users read at their own pace, scrolling back, copying content, and following links as needed. A voice agent operates in a fundamentally different medium: responses must be conversational, concise, and structured for listening rather than reading. Consider a banking agent that returns account information:

Text agent response:

Voice agent response: “You have three accounts. Your checking account ends in 4521 with a balance of three thousand two hundred forty-five dollars. Want me to go through the others, or would you like details on this one?”

The voice agent breaks information into digestible chunks and asks for confirmation before continuing. It uses an autonomous conversation style, proactively guiding the user rather than dumping everything at once.

Latency budget

Text users tolerate moderate latency: they see a typing indicator and wait. Voice users notice delays almost immediately, and silence in a voice conversation feels like the line went dead. This changes how agents must be architected. Amazon Nova 2 Sonic supports asynchronous tool calling, so the conversation continues naturally while tools run in the background. The model keeps accepting input, can run multiple tools in parallel, and adapts gracefully if the user changes their request mid-process, delivering all results while focusing on what’s still relevant.

Turn-taking and interruption

Text conversations are inherently turn-based: the user types, presses Enter, and waits for a response. Voice conversations are fluid. Users interrupt (barge-in), pause mid-sentence, and expect the agent to handle overlapping speech naturally. Native speech-to-speech models like Amazon Nova 2 Sonic handle this internally with built-in voice activity detection (VAD)…
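The banking example from the Response design section can be sketched in code as one way to reshape a text agent's tool output for speech. This is a minimal, hypothetical formatter, not part of Amazon Nova 2 Sonic or any AWS SDK: the `Account` record and the `spell_dollars` and `voice_summary` helpers are illustrative names. It spells out the balance in words, as the example voice response does, describes only the first account, and ends by handing the turn back to the user.

```python
from dataclasses import dataclass


@dataclass
class Account:
    """Hypothetical account record returned by a text agent's tool."""
    kind: str
    last_four: str
    balance_dollars: int


_ONES = ["zero", "one", "two", "three", "four", "five", "six", "seven",
         "eight", "nine", "ten", "eleven", "twelve", "thirteen", "fourteen",
         "fifteen", "sixteen", "seventeen", "eighteen", "nineteen"]
_TENS = ["", "", "twenty", "thirty", "forty", "fifty", "sixty", "seventy",
         "eighty", "ninety"]


def _below_thousand(n: int) -> str:
    """Spell out an integer from 1 to 999 in words."""
    words = []
    hundreds, rest = divmod(n, 100)
    if hundreds:
        words.append(f"{_ONES[hundreds]} hundred")
    if rest >= 20:
        tens, ones = divmod(rest, 10)
        words.append(_TENS[tens] + (f"-{_ONES[ones]}" if ones else ""))
    elif rest:
        words.append(_ONES[rest])
    return " ".join(words)


def spell_dollars(amount: int) -> str:
    """Spell out a whole-dollar amount below one million so it reads well aloud."""
    if not 0 <= amount < 1_000_000:
        raise ValueError("this sketch only handles 0 to 999,999")
    if amount == 0:
        return "zero dollars"
    thousands, rest = divmod(amount, 1000)
    parts = []
    if thousands:
        parts.append(f"{_below_thousand(thousands)} thousand")
    if rest:
        parts.append(_below_thousand(rest))
    unit = "dollar" if amount == 1 else "dollars"
    return f"{' '.join(parts)} {unit}"


def voice_summary(accounts: list[Account]) -> str:
    """Voice only the first account, then hand the turn back to the user."""
    if not accounts:
        return "I couldn't find any accounts for you."
    n = len(accounts)
    count = _ONES[n] if n < 20 else str(n)
    noun = "account" if n == 1 else "accounts"
    first = accounts[0]
    text = (f"You have {count} {noun}. Your {first.kind} account ends in "
            f"{first.last_four} with a balance of "
            f"{spell_dollars(first.balance_dollars)}.")
    if n > 1:
        # Ask before continuing instead of reading every account aloud.
        text += " Want me to go through the others, or would you like details on this one?"
    return text


if __name__ == "__main__":
    accounts = [Account("checking", "4521", 3245),
                Account("savings", "8810", 12000),
                Account("credit card", "3307", 500)]
    print(voice_summary(accounts))
```

Keeping this formatting as a separate step is one option for leaving the text agent's existing tool unchanged, so that only the presentation layer adapts to speech.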
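The asynchronous tool calling described in the Latency budget section happens inside Amazon Nova 2 Sonic, but the same principle, never leaving the conversation silent while a tool runs, can also guide any orchestration code you carry over from a text agent. The following is a minimal client-side sketch using Python's `asyncio`, not the Nova 2 Sonic API. The names `get_balance`, `get_recent_transactions`, `ToolRunner`, and `conversation` are hypothetical, and the `asyncio.sleep` calls stand in for slow backend calls and for the user speaking. It starts two tools in parallel, keeps talking while they run, and, when the user narrows the request, still collects every result but voices only the relevant one.

```python
import asyncio
from typing import Any, Coroutine


# Hypothetical tools standing in for a text agent's existing backend calls.
async def get_balance(account: str) -> str:
    await asyncio.sleep(0.2)  # simulate a slow backend call
    return f"balance for {account}"


async def get_recent_transactions(account: str) -> str:
    await asyncio.sleep(0.3)  # simulate an even slower backend call
    return f"transactions for {account}"


class ToolRunner:
    """Schedules tool calls as background tasks so the conversation never blocks."""

    def __init__(self) -> None:
        self.pending: dict[str, asyncio.Task] = {}

    def start(self, name: str, coro: Coroutine[Any, Any, str]) -> None:
        # Schedule the tool and return immediately instead of awaiting it.
        self.pending[name] = asyncio.create_task(coro)

    async def results(self) -> dict[str, str]:
        # Wait for every pending tool concurrently and collect all outputs.
        names = list(self.pending)
        outputs = await asyncio.gather(*self.pending.values())
        self.pending.clear()
        return dict(zip(names, outputs))


async def conversation() -> tuple[list[str], dict[str, str]]:
    runner = ToolRunner()
    runner.start("balance", get_balance("checking"))
    runner.start("transactions", get_recent_transactions("checking"))

    # The agent speaks right away instead of staying silent while tools run.
    spoken = ["Let me pull that up for you."]
    await asyncio.sleep(0.1)  # the user keeps talking while the tools run

    # Mid-process, the user narrows the request to the balance only.
    relevant = ["balance"]
    spoken.append("Sure, just the balance then.")

    # All results still arrive; only the still-relevant ones are voiced.
    results = await runner.results()
    spoken.extend(results[name] for name in relevant)
    return spoken, results


if __name__ == "__main__":
    spoken, results = asyncio.run(conversation())
    print(spoken)
    print(results)
```

Because both tools run concurrently, the total wait is roughly the slower tool's delay rather than the sum of both delays.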