How the NVIDIA Vera Rubin Platform is Solving Agentic AI’s Scale-Up Problem

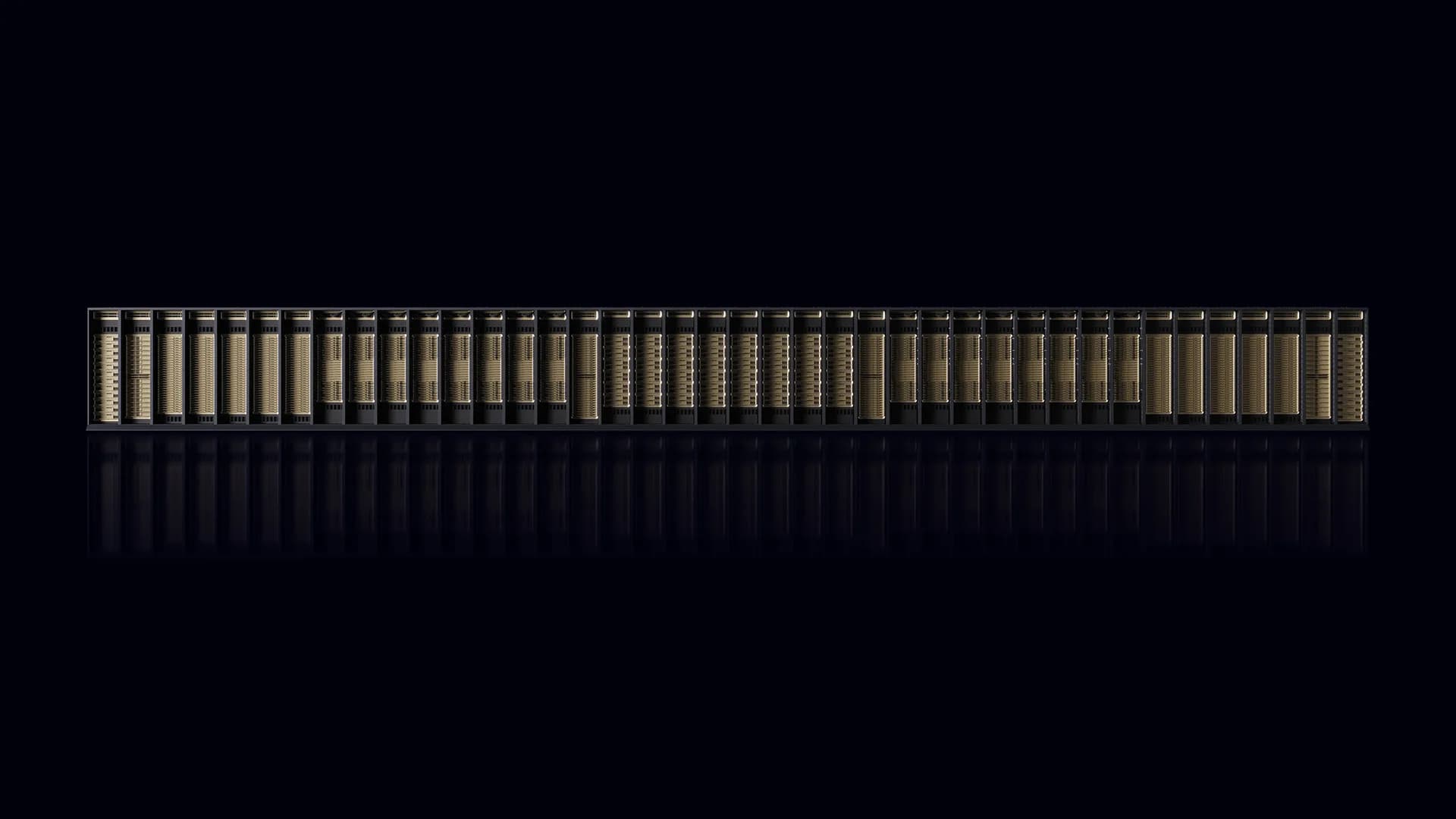

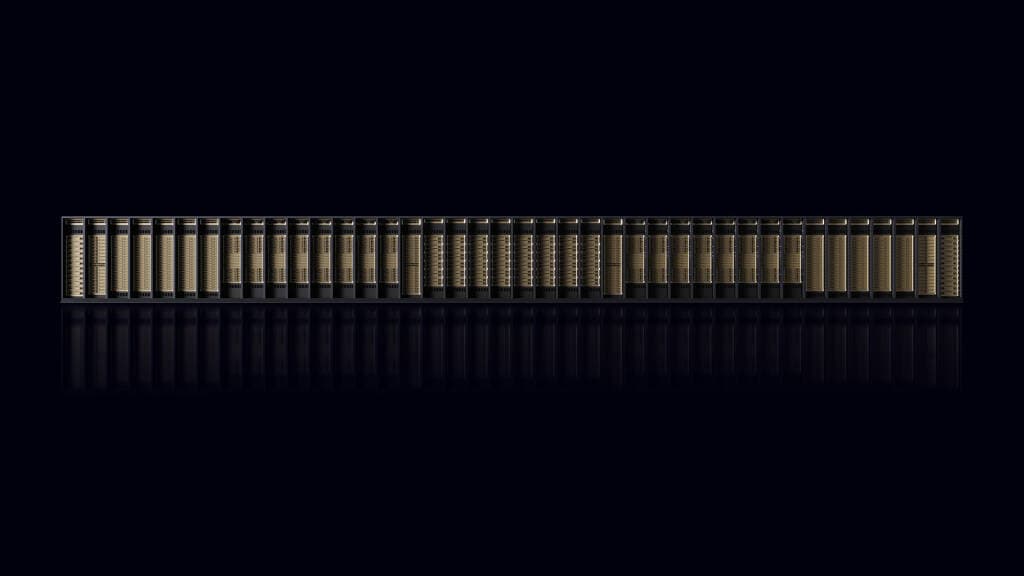

Agentic inference has fundamentally changed the runtime dynamics of inference workloads by introducing non-deterministic trajectories: the actions, observations, and decisions that an AI agent produces while working through a task. These trajectories compound end-to-end latency across hundreds of inference requests per session. NVIDIA Vera Rubin NVL72 handles the bulk of that inference load as the core compute engine of the NVIDIA Vera Rubin platform. The most demanding emerging multi-agent workloads, however, require sustained low-latency, high-throughput generation on trillion-parameter MoE models with long context windows. Until now, no platform has served this emerging workload economically. NVIDIA Groq 3 LPX, paired with Vera Rubin NVL72, is the first to deliver both high throughput and low latency at this point on the Pareto curve.

This post explores how the NVIDIA Vera Rubin platform solves this challenge through extreme co-design, combining high-throughput compute with low-latency, deterministic execution across hundreds to thousands of chips.

Why agentic workloads require predictable scale-up networking

Conventional data center networking fabrics are optimized for large training jobs and high-volume inference workloads, where small amounts of network jitter average out inside large batches. Premium AI services, by contrast, demand higher model capability and highly responsive user-visible performance. At this tier, agentic decode brings a fundamentally different set of requirements, including:

- Multi-turn model requests
- Smaller batches
- Extremely low latency

Long context and large MoE models (used in premium AI services) introduce additional networking challenges (Figure 1). Each agent in a multi-agent pipeline carries its own expanding KV cache, system prompt, tool definitions, and conversation history. That KV cache and any new tokens must be routed through trillion-parameter models and their associated experts, which are spread across different accelerators.
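To make the compounding concrete, here is a minimal Python sketch under a deliberately simple toy model: every generated token requires a fixed number of sequential cross-chip exchanges, and each exchange cannot finish until its slowest parallel transfer lands. Only communication time is modeled. The constants (`BASE_HOP_US`, `JITTER_US`, `HOPS_PER_TOKEN`, `PARALLEL_LINKS`) and the function names are hypothetical illustration values, not measurements of any NVIDIA or Groq system.

```python
import random

# Hypothetical illustration values, not measurements of any real fabric.
BASE_HOP_US = 2.0      # fixed cost of one chip-to-chip exchange (microseconds)
JITTER_US = 1.0        # worst-case extra delay a transfer may see on an arbitrated fabric
HOPS_PER_TOKEN = 40    # sequential cross-chip exchanges per generated token
PARALLEL_LINKS = 8     # transfers each exchange must wait on before the next hop


def exchange_latency(rng, jitter_us):
    """A synchronous exchange completes only when its slowest transfer lands."""
    return max(BASE_HOP_US + rng.uniform(0.0, jitter_us)
               for _ in range(PARALLEL_LINKS))


def session_latency(requests, tokens_per_request, jitter_us, seed=0):
    """Total communication time (us) for one agent session of sequential requests."""
    rng = random.Random(seed)
    total = 0.0
    # Every exchange in the session is on the critical path, one after another.
    for _ in range(requests * tokens_per_request * HOPS_PER_TOKEN):
        total += exchange_latency(rng, jitter_us)
    return total


if __name__ == "__main__":
    ideal = session_latency(requests=100, tokens_per_request=20, jitter_us=0.0)
    jittered = session_latency(requests=100, tokens_per_request=20, jitter_us=JITTER_US)
    print(f"fixed-latency exchanges: {ideal / 1000:.1f} ms")
    print(f"jittered exchanges:      {jittered / 1000:.1f} ms "
          f"({jittered / ideal - 1:.0%} overhead)")
```

Because each exchange waits on the maximum of `PARALLEL_LINKS` independent uniform delays, the expected penalty per exchange is n/(n+1) of the jitter bound (8/9 here) rather than the 1/2 a simple average would suggest, and that penalty is paid again on every sequential exchange in the session. In a large-batch job the same overhead is amortized over many sequences per step; in small-batch agentic decode it lands directly on user-visible latency.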
To pull this off, network-level orchestration must ensure minimal latency variability in the hops between chips. This cross-chip exchange is unavoidable in any SRAM-based architecture that can’t hold the model on a single chip, so the physical mechanism that carries the exchange becomes a key bottleneck in the serving system. The industry has traditionally addressed this challenge with:

- Runtime-arbitrated networking fabrics, where flow control is reactive and timing is statistically bounded rather than guaranteed.
- Large concentrations of on-die compute and memory, which only postpone the networking problem. Once model and context-window sizes force the system to scale up and out, multi-chip performance deteriorates.

Breaking the throughput-latency tradeoff at agentic scale requires a networking fabric designed together with the silicon, compiler, and serving stack. The LPU chip-to-chip (C2C) interconnect achieves this through extreme co-design, enabling multi-trillion-parameter models at scale.

How NVIDIA Groq 3 LPX addresses scale-up challenges

The NVIDIA Groq 3 LPX LPU C2C interconnect is designed to solve scale-up problems directly. Rather than treating interconnects as a conventional network that must absorb contention and timing uncertainty at runtime, LPU C2C extends Groq’s deterministic execution model across many LPUs. It does this through three tightly connected technologies:

- High-radix point-to-point links
- LPU Compiler-scheduled data movement
- Hardware-driven plesiosynchronous timing

Together, these technologies give Groq 3 LPU accelerators the flexibility to scale to thousands of chips while preserving predictable…
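The contrast between reactive, runtime-arbitrated flow control and compiler-scheduled data movement can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the LPU Compiler’s actual algorithm: each ordered chip pair is modeled as a dedicated point-to-point lane, time is divided into hypothetical fixed slots, and a greedy pass assigns every transfer a start slot before execution begins. `Transfer`, `schedule`, `makespan`, and `EXAMPLE_TRANSFERS` are hypothetical names.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Transfer:
    """One hypothetical cross-chip payload to move during a decode step."""
    name: str
    src: int        # sending chip index
    dst: int        # receiving chip index
    slots: int      # link time slots the payload occupies (assumed unit)
    ready: int = 0  # earliest slot at which the data exists on `src`


def schedule(transfers):
    """Assign each transfer a fixed (start, end) slot range at compile time.

    Each ordered (src, dst) pair is modeled as its own dedicated lane, so two
    transfers can only collide if they share a lane. Collisions are resolved
    here, before execution, by delaying the later transfer in list order.
    """
    busy = defaultdict(set)  # (src, dst) lane -> set of occupied slot indices
    plan = {}
    for t in transfers:
        lane = (t.src, t.dst)
        start = t.ready
        # Slide forward until the lane is free for the whole payload.
        while any(s in busy[lane] for s in range(start, start + t.slots)):
            start += 1
        busy[lane].update(range(start, start + t.slots))
        plan[t.name] = (start, start + t.slots)
    return plan


def makespan(plan):
    """Slot at which the last scheduled transfer completes."""
    return max(end for _, end in plan.values())


# Hypothetical transfers for one step; chip IDs and sizes are illustrative.
EXAMPLE_TRANSFERS = [
    Transfer("expert_a->chip1", src=0, dst=1, slots=4),
    Transfer("expert_b->chip2", src=0, dst=2, slots=4),
    Transfer("kv_shard->chip1", src=0, dst=1, slots=2),
    Transfer("partial_sum->chip0", src=3, dst=0, slots=3, ready=1),
]

if __name__ == "__main__":
    plan = schedule(EXAMPLE_TRANSFERS)
    for name, (start, end) in plan.items():
        print(f"{name:20s} slots [{start}, {end})")
    print("step completes at slot", makespan(plan))
```

Because every start slot is fixed before execution, the finish time (slot 6 for these example transfers) is known ahead of time and repeats identically on every run. In this toy model, an arbitrated fabric would instead resolve the collision between `kv_shard->chip1` and `expert_a->chip1` at runtime, with timing that is only statistically bounded.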