Granite 4.1 LLMs: How They’re Built

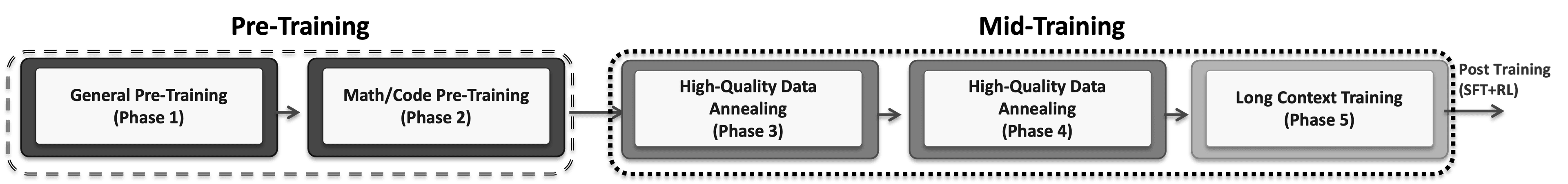

Authors: Granite Team, IBM

TL;DR: Granite 4.1 is a family of dense, decoder-only LLMs (3B, 8B, and 30B) trained on ~15T tokens using a multi-stage pre-training pipeline, including long-context extension of up to 512K tokens. The models are further refined with supervised fine-tuning on ~4.1M high-quality curated samples and reinforcement learning via on-policy GRPO with DAPO loss (Yu et al., 2025). Notably, the 8B instruct model matches or surpasses the previous Granite 4.0-H-Small (32B-A9B MoE) despite using a simpler dense architecture with fewer parameters. All Granite 4.1 models are released under the Apache 2.0 license.

Overview

Building high-quality small language models goes beyond simply scaling compute; it requires rigorous data curation throughout training. For Granite 4.1, we prioritized data quality over quantity, progressively refining the data mixture across five pre-training stages. We further curated supervised fine-tuning data using an LLM-as-Judge framework and applied a multi-stage reinforcement learning pipeline to systematically strengthen performance in math, coding, instruction following, and general chat.

Model Architecture

Granite 4.1 models use a decoder-only dense transformer architecture. The core design choices include Grouped Query Attention (GQA), Rotary Position Embeddings (RoPE), SwiGLU activations, RMSNorm, and shared input/output embeddings. All three model sizes share the same training pipeline and data strategy, differing only in architecture dimensions.

Pre-Training

Granite 4.1 is trained from scratch on approximately 15 trillion tokens using a five-phase training strategy. Phases 1–2 focus on foundational pre-training, phases 3–4 perform mid-training with progressively higher-quality data annealing, and phase 5 introduces long-context training, extending the context window to 512K tokens.
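To make the idea of a per-phase data mixture concrete, here is a minimal sketch of how a phase schedule could be written down and used to pick a data source for each training document. The token budgets and percentages are copied from the Phase 1 and Phase 2 descriptions in this post. Everything else is an assumption for illustration: the names `Phase`, `tokens_per_source`, and `sample_sources` are hypothetical, the percentages are treated as shares of each phase's token budget, and independent weighted sampling is only one plausible way to realize a mixture, not a description of the actual Granite data loader.

```python
import random
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Phase:
    """Hypothetical container for one pre-training phase."""
    name: str
    tokens_trillions: float
    mixture: Dict[str, float]  # source name -> percent of the phase (assumed token share)


# Figures copied from the Phase 1 and Phase 2 descriptions in this post.
PHASES: List[Phase] = [
    Phase("Phase 1: General Pre-Training", 10.0, {
        "CommonCrawl": 59.0, "Code": 20.0, "Math": 7.0,
        "Technical": 10.5, "Multilingual": 2.0, "Domain Specific": 1.5,
    }),
    Phase("Phase 2: Math/Code Pre-Training", 2.0, {
        "Math": 35.0, "Code": 30.0, "CommonCrawl-HQ": 12.0, "Synthetic": 9.0,
        "Technical": 10.0, "Multilingual": 3.0, "Domain": 1.0,
    }),
]


def tokens_per_source(phase: Phase) -> Dict[str, float]:
    """Approximate tokens (in trillions) each source contributes to a phase."""
    total = sum(phase.mixture.values())
    return {src: phase.tokens_trillions * pct / total
            for src, pct in phase.mixture.items()}


def sample_sources(phase: Phase, n: int, seed: int = 0) -> List[str]:
    """Draw n source names with probability proportional to the mixture weights."""
    rng = random.Random(seed)
    sources = list(phase.mixture)
    weights = list(phase.mixture.values())
    return rng.choices(sources, weights=weights, k=n)


if __name__ == "__main__":
    for phase in PHASES:
        print(phase.name)
        for src, tokens in tokens_per_source(phase).items():
            print(f"  {src}: ~{tokens:.2f}T tokens")
```

Keeping the mixture as plain data makes it easy to check that each phase's percentages sum to 100 and to compare phases directly, for example the shift in math share between Phase 1 and Phase 2.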
Each phase employs a distinct data mixture and learning-rate schedule, gradually shifting from broad web-scale data to more curated, domain-specific content.

Figure 2: The five-phase pre-training pipeline. Phases 1–2 are pre-training, Phases 3–4 are mid-training (high-quality data annealing), and Phase 5 is long-context extension (LCE) training.

Phase 1: General Pre-Training (10T tokens)

The first phase establishes broad language understanding using a general mixture of training data with a power learning rate schedule and warmup.

Data composition:
- CommonCrawl ~59%: general web data
- Code ~20%: programming languages and repositories
- Math ~7%: mathematical reasoning data
- Technical ~10.5%: scientific papers, technical documentation and manuals
- Multilingual ~2%: non-English language data
- Domain Specific ~1.5%: domain-specific content

Phase 2: Math/Code Pre-Training (2T tokens)

Phase 2 sharply increases the proportion of code and mathematical data, pivoting toward stronger reasoning capabilities while still maintaining general language coverage.

Data composition:
- Math ~35%: a 5x increase over Phase 1
- Code ~30%: a 1.5x increase
- CommonCrawl-HQ ~12%: high-quality common crawl subset
- Synthetic ~9%: synthetic high-quality data
- Technical ~10%
- Multilingual ~3%
- Domain ~1%

Phase 3: High-Quality Data Annealing (2T tokens)

Phase 3 transitions into mid-training with a more balanced, high-quality mixture and an exponential decay learning rate schedule. This is where we start blending in chain-of-thought and synthetic instruction data.

Data composition:
- CommonCrawl-HQ ~16.67%
- Math ~16.67%
- Code…