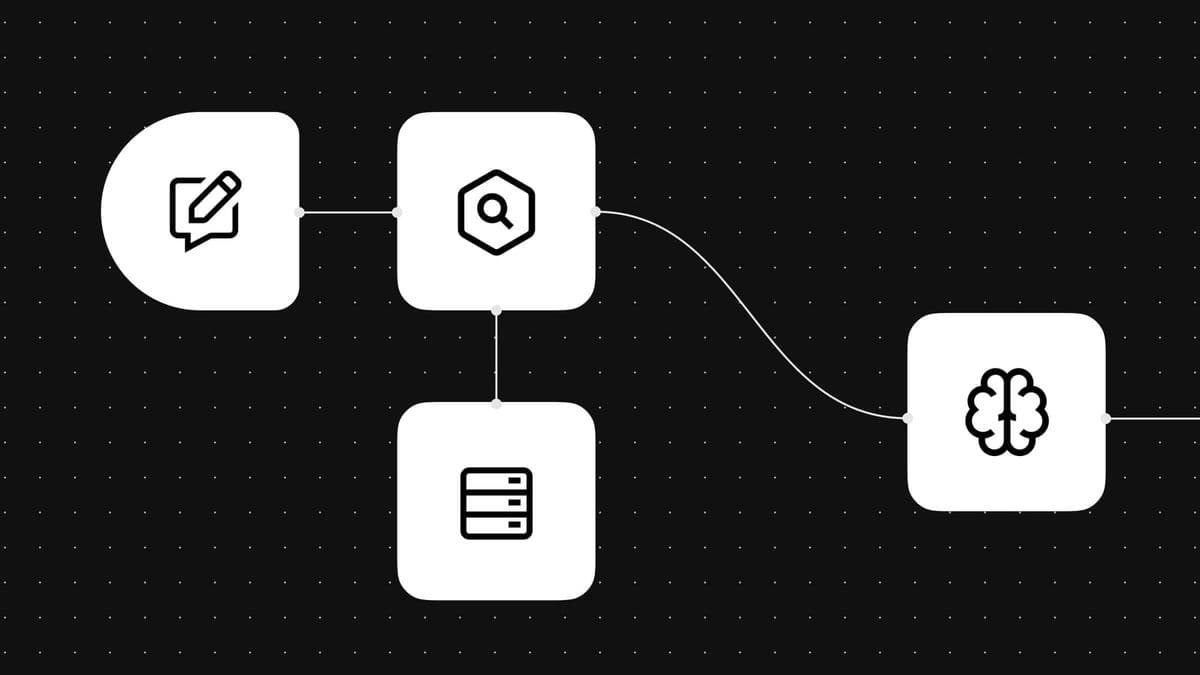

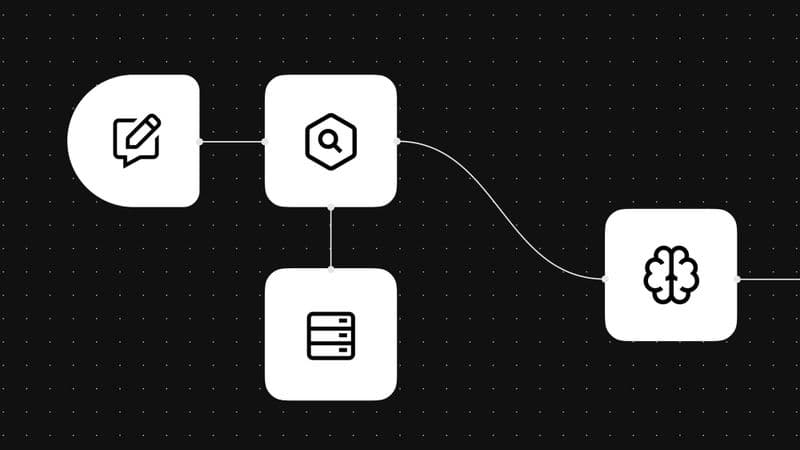

Advanced RAG: Data Cleaning and Retrieval Techniques

Retrieval-augmented generation (RAG) makes queries smarter by arming them with proprietary data and contextualized knowledge. But even well-built RAG pipelines can produce inaccurate answers and context windows polluted by noisy data. Advanced RAG emerged to fix that. RAG isn’t a single method: there are several ways to boost the accuracy and reliability of LLM outputs with this framework. This guide covers the advanced LLM RAG techniques teams use in production.

Why does basic RAG fall short?

Basic RAG is sometimes called Naive RAG because of its simple nature. It embeds each document as a single dense vector, indexes those vectors, retrieves the top-K matches for a query, and passes them to an LLM. Simple RAG works well in some scenarios, but it often struggles in real-world use. Here are a few common limitations:

- Poor recall: It fails to retrieve enough of the relevant information for the query, so it gives inaccurate or incomplete answers.
- Hallucinations: It retrieves insufficient or noisy information and gives unsupported answers.
- Ignored middle: It prioritizes the beginning and end of its input, so relevant context in the middle is omitted when the chunks are long.
- Poor domain knowledge: It is not tailored to specific knowledge domains, so the LLM returns answers that lack important nuance.
- Superficiality: It does not have enough data to satisfy the query, so it loops back over the data it has and produces repetitive output.

Naive RAG isn’t entirely reliable in how it retrieves, structures, and generates data. Advanced RAG techniques are specifically designed to address these gaps.

Advanced RAG techniques with LLMs

Moving beyond basic RAG is not a simple upgrade: you need to figure out where in the RAG pipeline something went wrong. Here are techniques to fix problems before, during, and after retrieval.

Pre-retrieval and data-indexing techniques

Cleaning data at the indexing stage improves the process before the first query.
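As a rough illustration of what cleaning at the indexing stage might look like, here is a minimal Python sketch. The specific steps (Unicode normalization, whitespace collapsing, dropping very short fragments, and removing case-insensitive duplicates) are assumptions chosen for the example, as are the function name `clean_passages` and the `min_chars` threshold. A real pipeline would tune these to its own data.

```python
import re
import unicodedata


def clean_passages(passages, min_chars=20):
    """Normalize passages and drop fragments and duplicates, keeping first-seen order.

    Illustrative only: the cleaning steps and the min_chars threshold are
    assumptions for this sketch, not a prescribed recipe.
    """
    seen = set()
    cleaned = []
    for raw in passages:
        # Fold compatibility characters (such as non-breaking spaces) into plain forms.
        text = unicodedata.normalize("NFKC", raw)
        # Collapse runs of whitespace, including newlines and tabs, into single spaces.
        text = re.sub(r"\s+", " ", text).strip()
        # Skip fragments too short to carry meaning (stray headers, page labels).
        if len(text) < min_chars:
            continue
        # Treat passages that differ only by letter case as duplicates.
        key = text.casefold()
        if key in seen:
            continue
        seen.add(key)
        cleaned.append(text)
    return cleaned


docs = [
    "RAG  pipelines retrieve\ncontext before generation.",
    "rag pipelines retrieve context before generation.",
    "Page 3",
    "Hybrid search combines dense and keyword retrieval.",
]
cleaned_docs = clean_passages(docs)
```

Running the cleaner before embedding means duplicate or near-empty passages never compete for a slot among the top-K matches.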
Let’s take a look at some pre-retrieval methods.

Increase information density using LLMs

LLMs are a quick way to pre-process data. They can create summaries, increase information density by removing redundancies, and generate hypothetical questions that each document could answer. By transforming raw text into optimized formats, you ensure the retrieval system surfaces essential information, not fluff.

Data chunking

You can use RAG chunking to process sections of data instead of entire documents. There’s no single way to execute this: both large and small chunks can improve retrieval, depending on the content and the queries. You can also implement more specialized methods like sliding windows and hierarchical chunking. LangChain’s recursive text splitter is a widely used starting point, automatically splitting text at progressively smaller boundaries such as paragraphs, sentences, and characters.

Self-query RAG

Self-query RAG enriches each chunk with metadata, like author, topic, and timestamp, so that retrieval can filter on those fields. This technique can improve the relevance and recency of information at runtime.

Retrieval techniques

Even well-indexed documents won’t return high-quality answers if retrieval fails. Use the following techniques to improve your fetching process.

Hybrid search

This method pairs the contextual nuance of dense vector search with…