Reinforcement fine-tuning with LLM-as-a-judge

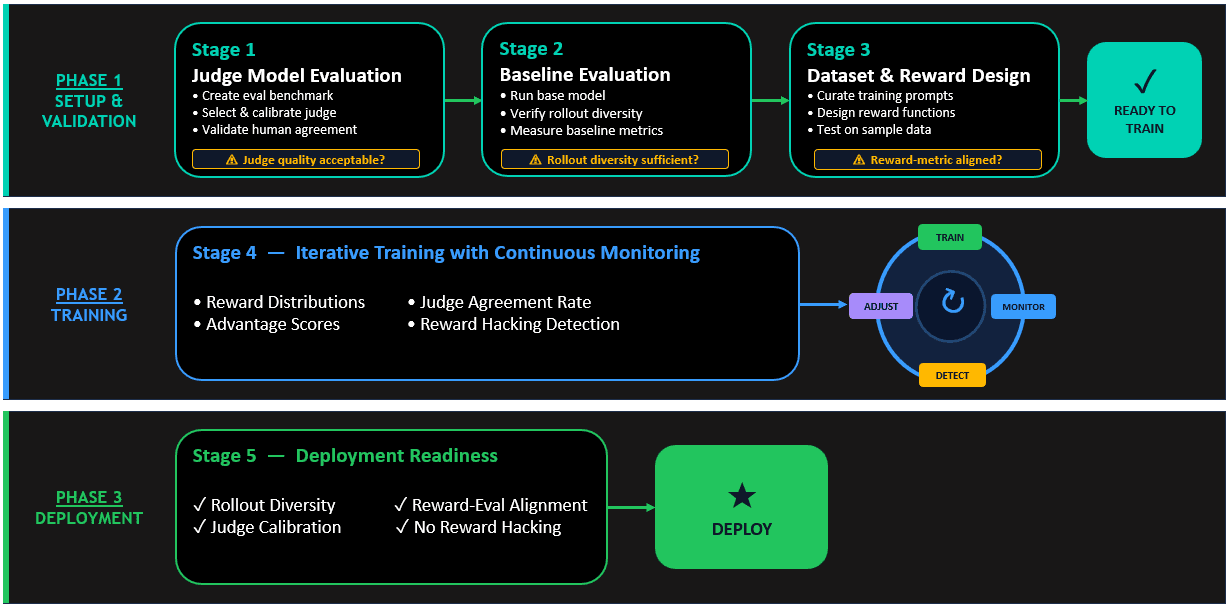

Large language models (LLMs) now drive the most advanced conversational agents, creative tools, and decision-support systems. However, their raw output often contains inaccuracies, policy misalignments, or unhelpful phrasing, issues that undermine trust and limit real-world utility. Reinforcement fine-tuning (RFT) has emerged as the preferred method to align these models efficiently, using automated reward signals to replace costly manual labeling.

At the heart of modern RFT are reward functions. They are built for each domain in one of two ways: as verifiable reward functions that score LLM generations with a piece of code (Reinforcement Learning with Verifiable Rewards, or RLVR), or with LLM-as-a-judge, where a separate language model evaluates candidate responses to guide alignment (Reinforcement Learning with AI Feedback, or RLAIF). Both methods provide scores that the RL algorithm uses to nudge the model toward solving the problem at hand. In this post, we take a deeper look at how RLAIF, or RL with LLM-as-a-judge, works effectively with Amazon Nova models.

Why RFT with LLM-as-a-judge compared to generic RFT?

Reinforcement fine-tuning can use any reward signal: straightforward hand-crafted rules (RLVR) or an LLM that evaluates model outputs (LLM-as-a-judge, or RLAIF). RLAIF makes alignment far more flexible and powerful, especially when reward signals are vague and hard to craft manually. Unlike generic RFT rewards that rely on blunt numeric scoring such as substring matching, an LLM judge reasons across multiple dimensions (correctness, tone, safety, relevance), providing context-aware feedback that captures subtleties and domain-specific nuances without task-specific retraining.
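
To make the contrast concrete, here is a minimal Python sketch of the two reward styles. It is an illustration under stated assumptions, not the implementation used with Amazon Nova: `JudgeFn`, `judge_reward`, `fake_judge`, and the PASS/FAIL reply format are all hypothetical, and the judge is a stand-in so the example runs offline.

```python
from typing import Callable

# Hypothetical type: any function that sends text to a judge model and returns its reply.
JudgeFn = Callable[[str], str]


def substring_reward(response: str, expected: str) -> float:
    """RLVR-style check: reward 1.0 only if the expected string appears verbatim."""
    return 1.0 if expected.lower() in response.lower() else 0.0


def judge_reward(question: str, response: str, call_judge: JudgeFn) -> float:
    """RLAIF-style check: ask a judge model for a verdict and map it to a score."""
    judge_prompt = (
        "Decide whether the response answers the question correctly.\n"
        f"Question: {question}\n"
        f"Response: {response}\n"
        "Reply with PASS or FAIL on the first line, then a one-sentence rationale."
    )
    reply = call_judge(judge_prompt).strip()
    # An empty reply has no verdict line and is treated as a failure.
    verdict = reply.splitlines()[0].strip().upper() if reply else ""
    return 1.0 if verdict == "PASS" else 0.0


def fake_judge(judge_prompt: str) -> str:
    """Stand-in for a real judge model (assumption) so the sketch runs offline."""
    return "PASS\nThe response gives the correct boiling point in words."


question = "At what temperature does water boil at sea level, in Celsius?"
response = "Water boils at one hundred degrees Celsius at sea level."

# The rule misses a correct answer that is phrased differently from the expected string.
rule_score = substring_reward(response, expected="100")
judge_score = judge_reward(question, response, fake_judge)
```

In this toy case the substring rule scores a correct but spelled-out answer as a failure, while a judge that reads the response in context can still pass it.
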
LLM judges also offer built-in explainability through rationales (for example, “Response A cites peer-reviewed studies”). These rationales provide diagnostics that accelerate iteration, pinpoint failure modes directly, and reduce hidden misalignments, something static reward functions can’t do.

Implementing LLM-as-a-judge: Six critical steps

This section covers the key steps involved in designing and deploying LLM-as-a-judge reward functions.

Select the judge architecture

The first critical decision is selecting your judge architecture. LLM-as-a-judge offers two primary evaluation modes, rubric-based (point-based) judging and preference-based judging, each suited to different alignment scenarios.

Define your evaluation criteria

After you’ve selected your judge type, articulate the specific dimensions that you want to improve. Clear evaluation criteria are the foundation of effective RLAIF training.

For preference-based judges: Write clear prompts explaining what makes one response better than another. Be explicit about quality preferences with concrete examples. Example: “Prefer responses that cite authoritative sources, use accessible language, and directly address the user’s question.”

For rubric-based judges: We recommend using Boolean (pass/fail) scoring. Boolean scoring is more reliable and reduces judge variability compared to fine-grained 1–10 scales. Define clear pass/fail criteria for each evaluation dimension with specific, observable characteristics.

Select and configure your judge model

Choose an LLM with sufficient reasoning capability to evaluate your target domain, configured through Amazon Bedrock and called from an AWS Lambda reward function. For common domains like math, coding, and conversational capabilities, smaller models can work well with careful prompt engineering.

Refine your judge model prompt

Your judge prompt is the foundation of alignment quality. Design it to produce structured, parseable…
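
As one illustration of Boolean rubric scoring combined with a parseable judge reply, the sketch below builds a judge prompt from a small rubric and converts the reply into a reward. Everything here is an assumption made for the example: the criterion names in `RUBRIC`, the JSON reply format, and the choice to average pass/fail verdicts into a single score are hypothetical, not a prescribed format.

```python
import json

# Hypothetical rubric: each criterion is a pass/fail question for the judge.
RUBRIC = {
    "cites_sources": "Does the response cite at least one authoritative source?",
    "accessible_language": "Is the response free of unexplained jargon?",
    "addresses_question": "Does the response directly answer the user's question?",
}


def build_judge_prompt(question: str, response: str) -> str:
    """Assemble a prompt that asks for one Boolean verdict per criterion."""
    criteria = "\n".join(f"- {name}: {text}" for name, text in RUBRIC.items())
    return (
        "Evaluate the response against each criterion.\n"
        f"Criteria:\n{criteria}\n\n"
        f"Question: {question}\nResponse: {response}\n\n"
        "Return only a JSON object mapping each criterion name to true or false."
    )


def rubric_reward(judge_reply: str) -> float:
    """Fraction of rubric criteria passed; 0.0 if the reply cannot be parsed."""
    try:
        verdicts = json.loads(judge_reply)
    except json.JSONDecodeError:
        return 0.0
    if not isinstance(verdicts, dict):
        return 0.0
    # Only a literal JSON true counts as a pass; missing or non-Boolean values fail.
    passed = sum(1 for name in RUBRIC if verdicts.get(name) is True)
    return passed / len(RUBRIC)


# Example reply a judge might return (made up for illustration).
example_reply = (
    '{"cites_sources": true, "accessible_language": true, "addresses_question": false}'
)
example_reward = rubric_reward(example_reply)
```

Treating unparseable or incomplete replies as failures keeps the reward function from crashing during training, at the cost of penalizing the policy for judge formatting errors; logging those cases separately is one way to tell the two apart.
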