Power video semantic search with Amazon Nova Multimodal Embeddings

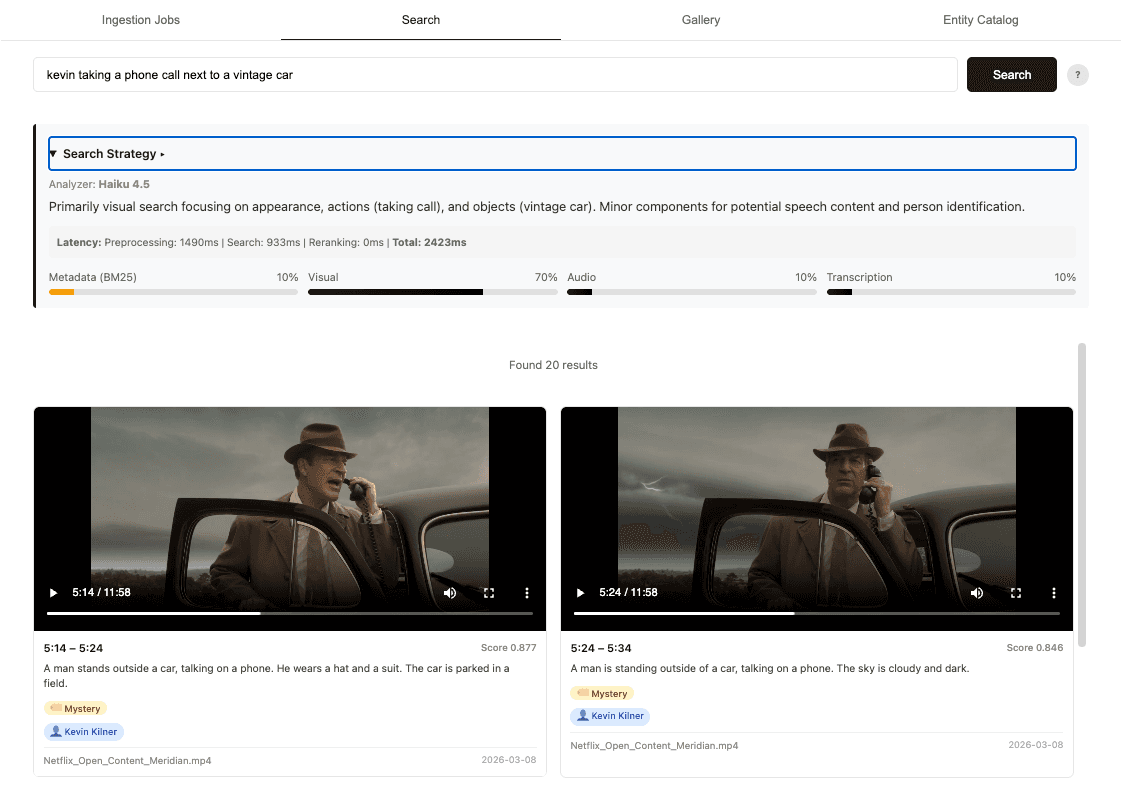

Artificial Intelligence Power video semantic search with Amazon Nova Multimodal Embeddings Video semantic search is unlocking new value across industries. The demand for video-first experiences is reshaping how organizations deliver content, and customers expect fast, accurate access to specific moments within video. For example, sports broadcasters need to surface the exact moment a player scored to deliver highlight clips to fans instantly. Studios need to find every scene featuring a specific actor across thousands of hours of archived content to create personalized trailers and promotional content. News organizations need to retrieve footage by mood, location, or event to publish breaking stories faster than competitors. The goal is the same: deliver video content to end users quickly, capture the moment, and monetize the experience. Video is naturally more complex than other modalities like text or image because it amalgamates multiple unstructured signals: the visual scene unfolding on screen, the ambient audio and sound effects, the spoken dialogue, the temporal information, and the structured metadata describing the asset. A user searching for “a tense car chase with sirens” is asking about a visual event and an audio event at the same time. A user searching for a specific athlete by name may be looking for someone who appears prominently on screen but is never spoken aloud. The dominant approach today grounds all video signals into text, whether through transcription, manual tagging, or automated captioning, and then applies text embeddings for search. While this works for dialogue-heavy content, converting video to text inevitably loses critical information. Temporal understanding disappears, and transcription errors emerge from visual and audio quality issues. What if you had a model that could process all modalities and directly map them into a single searchable representation without losing detail? Amazon Nova Multimodal Embeddings is a unified embedding model that natively processes text, documents, images, video, and audio into a shared semantic vector space. It delivers leading retrieval accuracy and cost efficiency. In this post, we show you how to build a video semantic search solution on Amazon Bedrock using Nova Multimodal Embeddings that intelligently understands user intent and retrieves accurate video results across all signal types simultaneously. We also share a reference implementation you can deploy and explore with your own content. Solution overview We built our solution on Nova Multimodal Embeddings combined with an intelligent hybrid search architecture that fuses semantic and lexical signals across all video modalities. Lexical search matches exact keywords and phrases, while semantic search understands meaning and context. We will explain our choice of this hybrid approach and its performance benefits in later sections. The architecture consists of two phases: an ingestion pipeline (steps 1-6) that processes video into searchable embeddings, and a search pipeline (steps 7-10) that routes user queries intelligently across those representations and merges results into a ranked list. Here are details for each of the steps: - Upload – Videos uploaded via browser are stored in Amazon Simple Storage Service (Amazon S3), triggering the Orchestrator AWS Lambda to update Amazon DynamoDB…