Optimize video semantic search intent with Amazon Nova Model Distillation on Amazon Bedrock

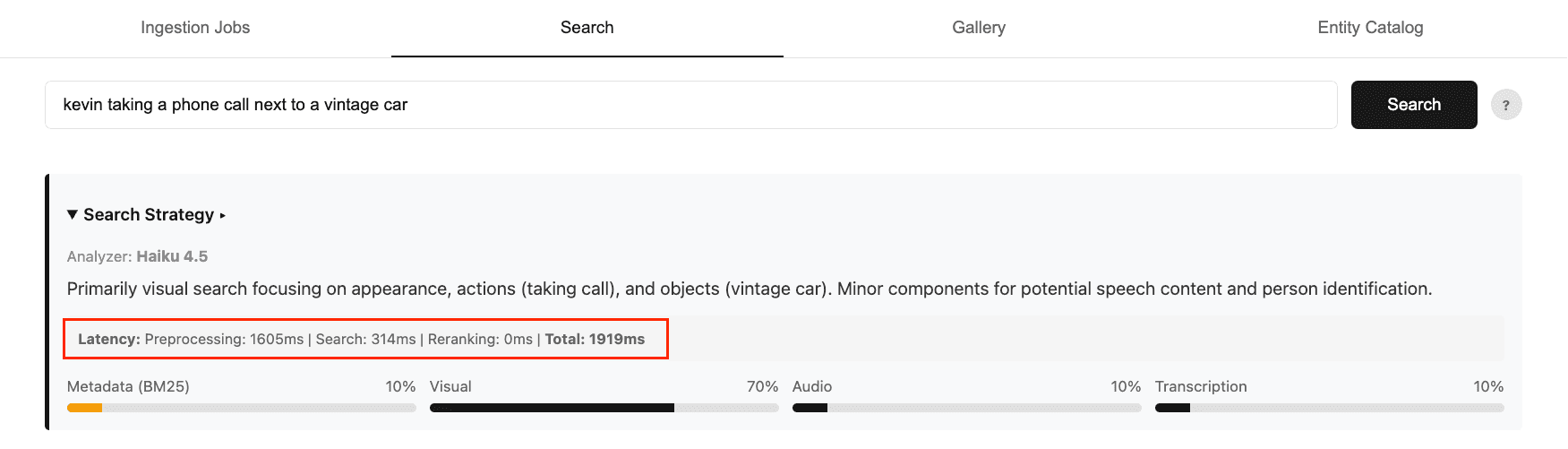

Optimizing models for video semantic search requires balancing accuracy, cost, and latency. Smaller, faster models lack routing intelligence, while larger, more accurate models add significant latency overhead. In Part 1 of this series, we showed how to build a multimodal video semantic search system on AWS with intelligent intent routing using the Anthropic Claude Haiku model in Amazon Bedrock. While the Haiku model delivers strong accuracy for user search intent, it increases end-to-end search time to 2-4 seconds and accounts for 75% of the overall latency.

Now consider what happens as the routing logic grows more complex. Enterprise metadata can be far more complex than the five attributes in our example (title, caption, people, genre, and timestamp). Customers may factor in camera angles, mood and sentiment, licensing and rights windows, and other domain-specific taxonomies. More nuanced logic means a more demanding prompt, and a more demanding prompt leads to slower and more expensive responses.

This is where model customization comes in. Rather than choosing between a model that's fast but too simple and one that's accurate but too expensive or too slow, we can meet all three goals (accuracy, cost, and latency) by training a small model to perform the task accurately at much lower latency and cost.

In this post, we show you how to use Model Distillation, a model customization technique on Amazon Bedrock, to transfer routing intelligence from a large teacher model (Amazon Nova Premier) into a much smaller student model (Amazon Nova Micro). This approach cuts inference cost by over 95% and reduces latency by 50% while maintaining the nuanced routing quality that the task demands.

Solution overview

We will walk through the full distillation pipeline end to end in a Jupyter notebook.
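To make the pipeline's input concrete, here is a minimal sketch of how prompt-only training records could be assembled as JSON Lines for a distillation job. It assumes the Amazon Bedrock conversation schema (`bedrock-conversation-2024`) for distillation input. The system prompt, the sample queries, and the helper names `build_record` and `to_jsonl` are hypothetical and are not taken from the notebook.

```python
import json

# Schema tag assumed here for Bedrock conversation-style distillation records.
SCHEMA_VERSION = "bedrock-conversation-2024"

# Hypothetical system prompt; the actual routing prompt lives in the notebook.
ROUTING_SYSTEM_PROMPT = (
    "Decide which video metadata attributes (title, caption, people, genre, "
    "timestamp) a search query should be routed to."
)


def build_record(user_query, system_prompt=ROUTING_SYSTEM_PROMPT):
    """Return one prompt-only training record as a Python dict."""
    return {
        "schemaVersion": SCHEMA_VERSION,
        "system": [{"text": system_prompt}],
        "messages": [
            # Only the user turn is supplied; no assistant response is included.
            {"role": "user", "content": [{"text": user_query}]},
        ],
    }


def to_jsonl(queries):
    """Serialize one JSON record per line (JSON Lines)."""
    return "\n".join(json.dumps(build_record(q)) for q in queries)


# Made-up queries for illustration only.
sample_queries = [
    "interviews with the lead actor from last season",
    "funny clips about cooking disasters",
]
jsonl_text = to_jsonl(sample_queries)
print(jsonl_text)
```

Each line carries a system prompt and a single user turn. The resulting text could then be written to a `.jsonl` file and uploaded to Amazon S3 as the training dataset.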
At a high level, the notebook contains the following steps:

- Prepare training data: Generate 10,000 synthetic labeled examples using Nova Premier and upload the dataset to Amazon Simple Storage Service (Amazon S3) in Bedrock distillation format
- Run distillation training job: Configure the job with teacher and student model identifiers and submit it via Amazon Bedrock
- Deploy the distilled model: Deploy the custom model using on-demand inference for flexible, pay-per-use access
- Evaluate the distilled model: Compare routing quality against the base Nova Micro and the original Claude Haiku baseline using Amazon Bedrock Model Evaluation

The complete notebook, training data generation script, and evaluation utilities are available in the GitHub repository.

Prepare training data

One of the key reasons we chose model distillation over other customization techniques like supervised fine-tuning (SFT) is that it does not require a fully labeled dataset. With SFT, every training example needs a human-generated response as ground truth. With distillation, you only need prompts. Amazon Bedrock automatically invokes the teacher model to generate high-quality responses and applies data synthesis and augmentation techniques behind the scenes to produce a diverse training dataset of up to 15,000 prompt-response pairs. That said, you can optionally provide a labeled…