Ecom-RLVE: Adaptive Verifiable Environments for E-Commerce Conversational Agents

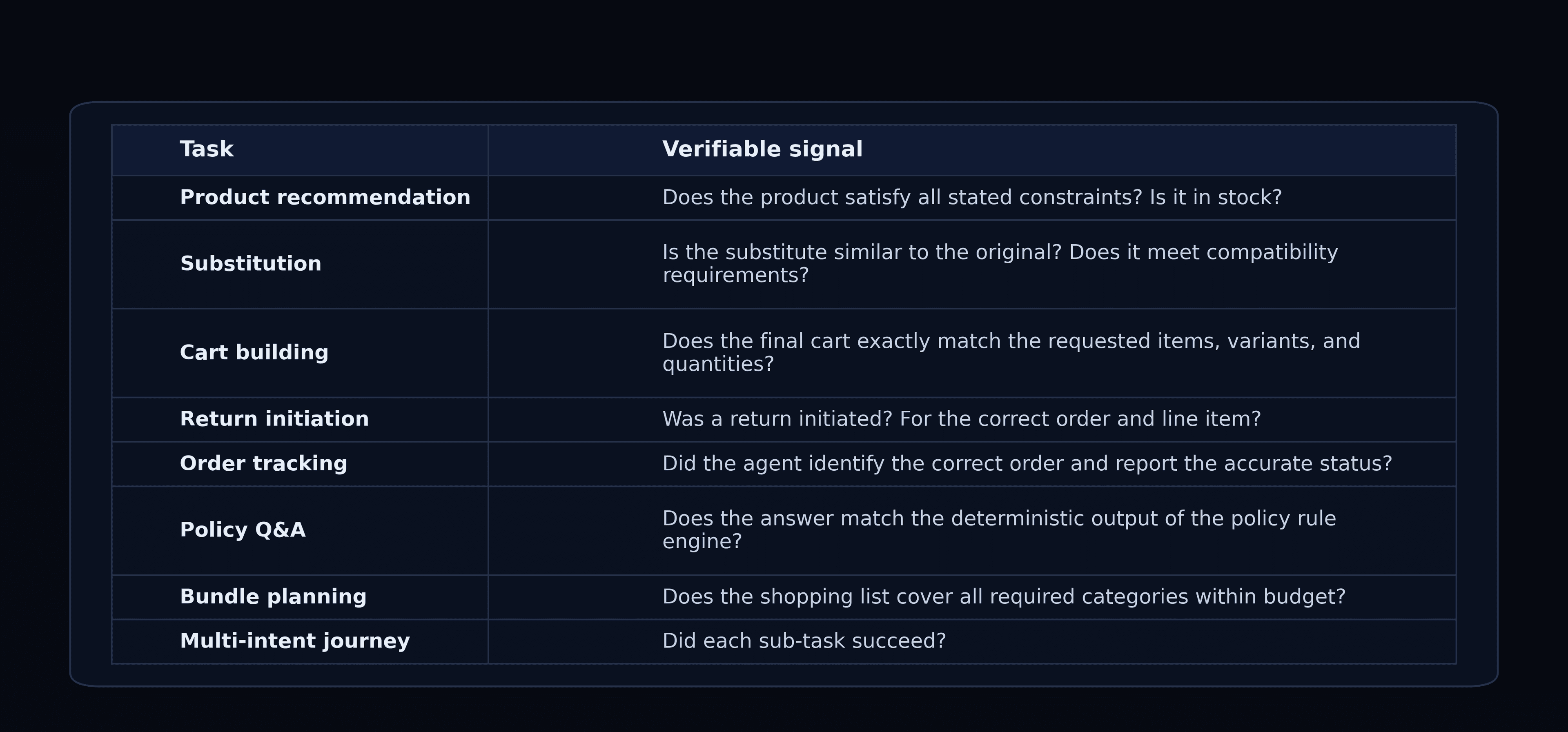

TL;DR: We extend the RLVE framework from single-turn reasoning puzzles to multi-turn, tool-augmented e-commerce conversations. EcomRLVE-GYM provides 8 verifiable environments (product discovery, substitution, cart building, returns, order tracking, policy QA, bundle planning, and multi-intent journeys), each with procedural problem generation, a 12-axis difficulty curriculum, and algorithmically verifiable rewards. We train a Qwen 3 8B model with DAPO over 300 steps and present early results demonstrating that environment scaling and adaptive difficulty transfer to agentic, real-world task completion.

This project originated in the PyTorch OpenEnv Hackathon and is still evolving. Follow us for updates 🔥

Why RL for shopping agents?

Large language models can hold fluent conversations, yet deploying them as shopping assistants reveals a persistent gap: fluency ≠ task completion. A customer who asks "find me a USB-C charger under $25 that ships in two days" needs an agent that invokes the right catalog search, filters on three hard constraints, avoids hallucinating product IDs it never retrieved, and handles follow-ups when the top result goes out of stock.

Supervised fine-tuning can teach surface-level tool use from demonstrations, but it cannot scale to the combinatorial space of constraint configurations, partial-information dialogues, and multi-step transactional workflows that real e-commerce demands.

Reinforcement learning with verifiable rewards (RLVR) offers an alternative: the agent optimises for outcomes. Did the products satisfy the constraints? Was the cart correct? Was the return initiated for the right order line? The challenge is constructing reward functions that are both verifiable (no LLM-as-a-judge subjectivity) and adaptive (difficulty that grows with the policy's capability).
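To make "verifiable" concrete, here is a minimal sketch of how the USB-C charger request could be checked by a program rather than a judge model. The `Product` record, its field names, and both functions are hypothetical illustrations, not EcomRLVE-GYM's actual API; the sketch only assumes that the environment knows which products the agent's tool calls returned.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Product:
    # Hypothetical catalog record; field names are assumptions for illustration.
    product_id: str
    connector: str
    price_usd: float
    ships_in_days: int


def satisfies_constraints(p: Product) -> bool:
    """The three hard constraints from the example request."""
    return p.connector == "USB-C" and p.price_usd < 25.0 and p.ships_in_days <= 2


def verify_recommendations(
    recommended_ids: list[str], retrieved: dict[str, Product]
) -> dict[str, bool]:
    """Check an agent's final recommendation against what it actually retrieved."""
    # Hallucination check: every recommended ID must have come from a tool call.
    grounded = all(pid in retrieved for pid in recommended_ids)
    # Constraint check: requires a non-empty, fully grounded recommendation.
    # Short-circuiting on `grounded` avoids looking up unknown IDs.
    constraints_met = bool(recommended_ids) and grounded and all(
        satisfies_constraints(retrieved[pid]) for pid in recommended_ids
    )
    return {"grounded": grounded, "constraints_met": constraints_met}


# Products the agent's (hypothetical) search tool returned during the episode.
retrieved = {
    "P1": Product("P1", "USB-C", 19.99, 2),
    "P2": Product("P2", "Lightning", 14.99, 1),
}

print(verify_recommendations(["P1"], retrieved))  # correct product
print(verify_recommendations(["P2"], retrieved))  # wrong connector
print(verify_recommendations(["P9"], retrieved))  # ID never retrieved
```

Both checks are deterministic functions of the episode's state, which is what lets the outcome serve as a reward signal without human annotation.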
From RLVE-Gym to EcomRLVE-GYM

RLVE-Gym provides 400 environments for sorting, multiplication, Sudoku, and other algorithmic-reasoning tasks. However, those are all single-turn, text-in / text-out puzzles; extending to agentic domains was left as future work. EcomRLVE-GYM fills that gap: we stay in the verifiable regime (e-commerce outcomes can be checked algorithmically) while extending to multi-turn, tool-augmented, agentic conversations. In these environments the agent must act (call tools, modify world state) rather than merely reason (produce a text answer), and it must compensate for deficiencies in the search system.

EcomRLVE-GYM makes customer-service outcomes structurally verifiable: every reward signal can be evaluated by a program with access to the hidden ground-truth goal. No human annotation or LLM-as-a-judge is needed.

What a training episode looks like

Before we explain the framework, here is what a single EcomRLVE episode looks like at difficulty d = 4. The environment generates a hidden goal, a simulated user opens the chat, and the agent must use tools to satisfy the request. Every action is verified algorithmically; no LLM judge is required.

The reward is fully computed by code: F1 over (product, variant, qty) tuples, an efficiency bonus for finishing in fewer turns, and a hallucination check that every recommended product ID was actually retrieved. If the agent had picked the Lightning variant instead of USB-C, the simulated user would have corrected it mid-dialogue, and the F1 would have dropped.

The eight…